Optimizing API Calls: Integry's Lazy Sync Patterns for Agents, Integrations, and MCPs

AI is only as good as the data and latency you give it. The trick is serving both without DoSing your partners.

TL;DR

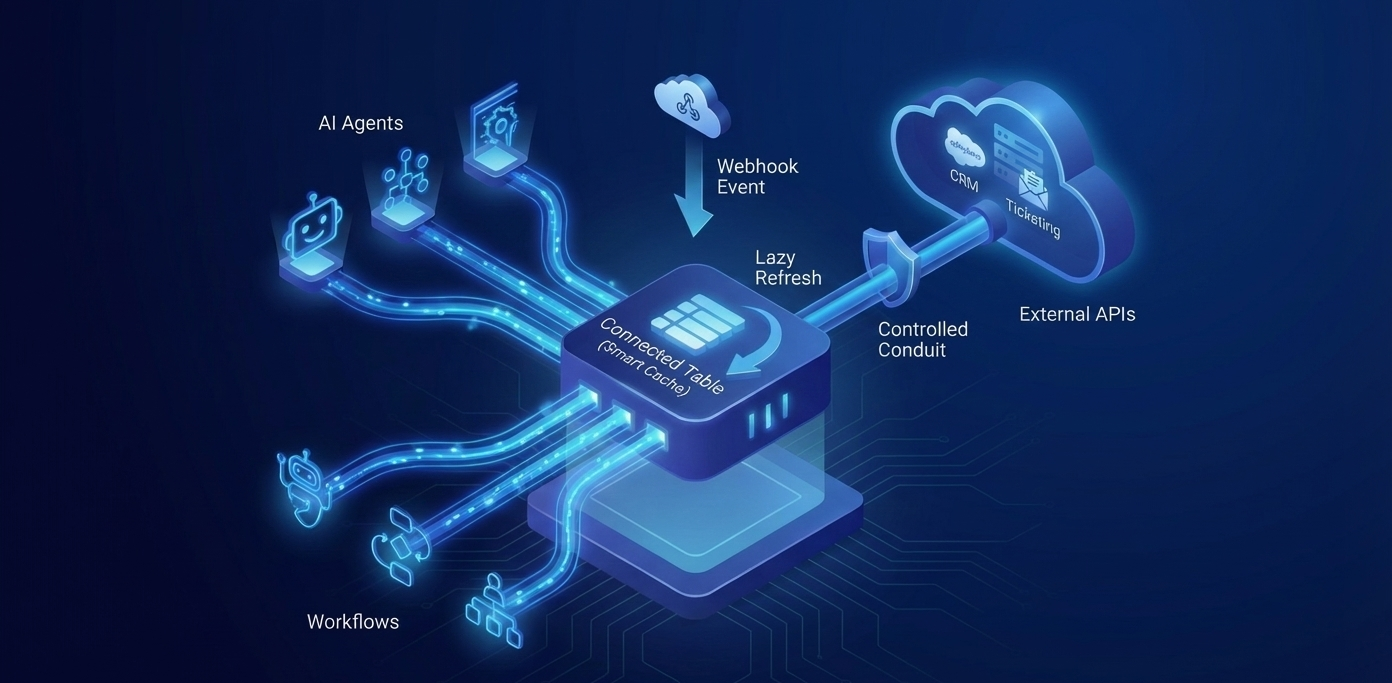

Most agent stacks and traditional workflow runners still hammer third‑party APIs with constant polling and re‑indexing. We're introducing local Connected Tables at Integry with webhook‑driven invalidation and just‑in‑time refresh for APIs that act as data sources. Hot reads return instantly from a local store, and cold or stale rows are refreshed on demand, which keeps user experience snappy while dramatically reducing rate‑limit pressure and cost for both agents and scheduled workflows. Connected Tables work transparently behind the scenes, so workflows and agents need no changes to benefit.

The Problem: Great AI and Busy Workflows, Terrible Syncs

AI agents and workflows are only as useful as the data they can access. Without fresh, structured data from the systems where work happens, AI cannot make informed decisions or automate meaningful tasks. Similarly, complex workflows that orchestrate actions across multiple platforms depend on accurate, up-to-date information to function correctly.

However, the traditional approach to keeping this data fresh creates significant problems. Most systems rely on one of two patterns:

- Constant polling: Repeatedly fetching data from third-party APIs on a fixed schedule, regardless of whether anything has changed

- On-demand fetching: Making API calls every time an agent or workflow needs data, leading to redundant requests

Both approaches have serious drawbacks:

- Rate limit exhaustion: APIs have strict rate limits. Excessive polling or redundant fetches quickly hit these limits, causing 429 errors and service disruptions

- High costs: Many APIs charge per request. Unnecessary calls directly increase operational expenses

- Poor performance: Waiting for API responses on every request adds latency, making agents and workflows feel sluggish

- Stale data: Even with polling, there's always a window where data is out of date between poll intervals

The fundamental issue is that these patterns treat all data access the same way, without considering whether data has actually changed or how frequently it's accessed. This creates unnecessary load on both your systems and your API partners.

The Solution: User/Agent-Driven Lazy Sync via Connected Tables

Connected Tables solve these problems by introducing a smart caching layer that sits between your agents or workflows and third-party APIs. Instead of constantly polling or making redundant requests, Connected Tables maintain a local cache that's intelligently updated based on actual usage and change events.

Here's how it works:

- Initial data import: When you first connect to an API, Connected Tables perform an initial import of the relevant data into a local cache

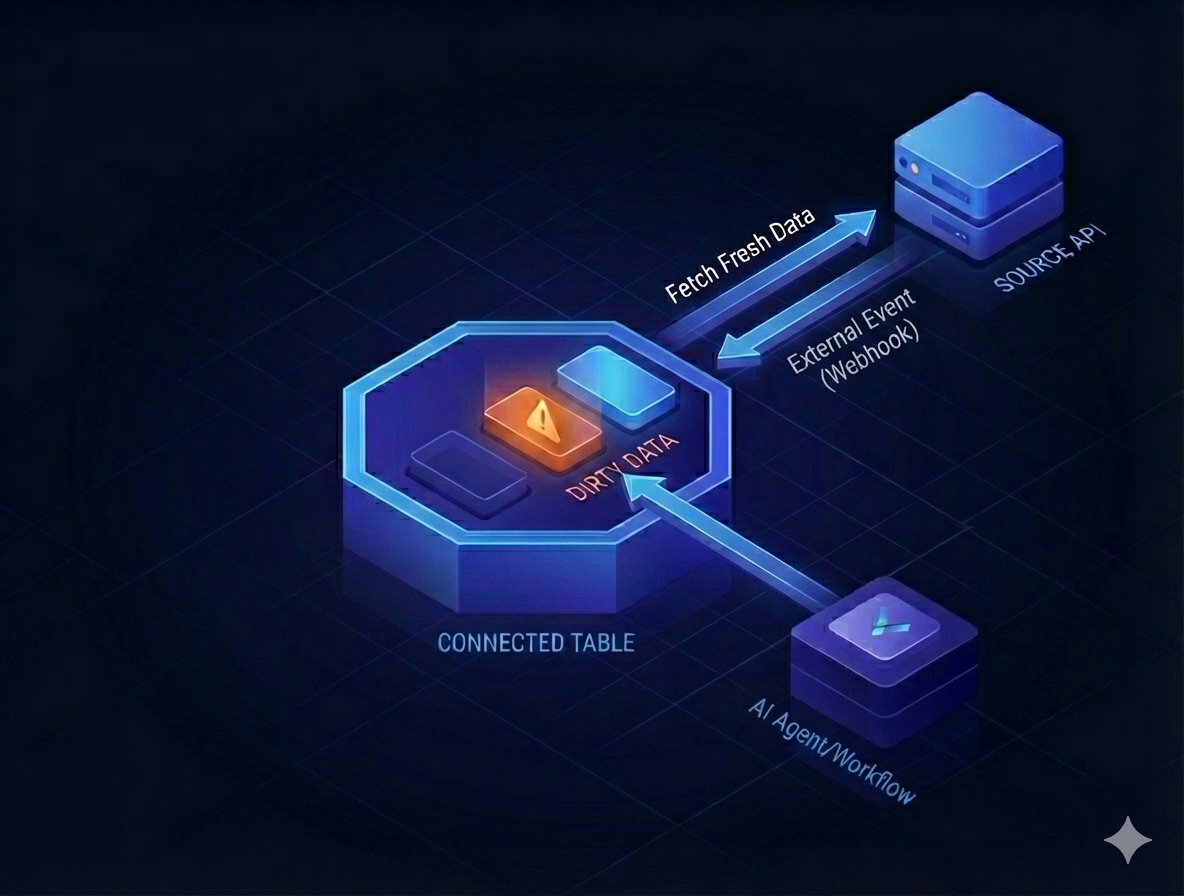

- Webhook-driven invalidation: When data changes in the source system, webhooks notify Connected Tables to mark specific records as "dirty" (potentially stale)

- Lazy refresh on access: When an agent or workflow requests data, Connected Tables check if the cached version is clean. If it's dirty or missing, only then do they fetch fresh data from the API

- Instant responses: Clean cached data is returned immediately, providing sub-millisecond response times

This approach combines the best of both worlds: the freshness of real-time data with the performance of local caching. You only make API calls when necessary, dramatically reducing rate limit pressure and costs while maintaining data accuracy.
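The steps above can be sketched in a few lines of Python. This is a minimal illustration, not Integry's implementation: the `ConnectedTable` class, its method names, and the `fetch` callable standing in for a third-party API are all assumptions made for the example.

```python
from typing import Callable, Dict, Set


class ConnectedTable:
    """Toy local store with dirty-flag invalidation and lazy refresh."""

    def __init__(self, fetch: Callable[[str], dict]) -> None:
        self._fetch = fetch                  # hypothetical third-party API call
        self._rows: Dict[str, dict] = {}     # local cache, keyed by row id
        self._dirty: Set[str] = set()        # ids flagged as potentially stale
        self.api_calls = 0                   # counter, for illustration only

    def import_rows(self, rows: Dict[str, dict]) -> None:
        """Initial import: load rows into the local store as clean."""
        self._rows.update(rows)
        self._dirty.difference_update(rows)

    def on_webhook(self, row_id: str) -> None:
        """Webhook-driven invalidation: flag the row, do not fetch yet."""
        self._dirty.add(row_id)

    def get(self, row_id: str) -> dict:
        """Lazy refresh: call the API only if the row is missing or dirty."""
        if row_id not in self._rows or row_id in self._dirty:
            self._rows[row_id] = self._fetch(row_id)
            self._dirty.discard(row_id)
            self.api_calls += 1
        return self._rows[row_id]


# Simulated source system and a short walk through the read path.
source = {"t1": {"status": "open"}}
table = ConnectedTable(fetch=lambda row_id: dict(source[row_id]))
table.import_rows({"t1": {"status": "open"}})

table.get("t1")                      # clean row: served locally, no API call
source["t1"]["status"] = "closed"    # the record changes upstream
table.on_webhook("t1")               # the webhook only marks the row dirty
row = table.get("t1")                # dirty row: one fetch, then clean again
```

In this sketch the only API call happens on the read that follows the webhook. Reads of clean rows never leave the local store.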

Key Benefits

Our implementation of Connected Tables has delivered measurable improvements:

- 85%+ cache hit rate: The vast majority of data requests are served from cache, eliminating API calls entirely

- 60-90% fewer API calls: By only fetching when data is actually stale, we've reduced API usage by more than half in most cases

- 42% fewer timeouts and errors: Reduced API load means fewer rate limit errors and more reliable operations

- Faster perceived response times: Instant cache responses make agents and workflows feel significantly more responsive

- Lower costs: Fewer API calls directly translate to lower usage-based billing from API providers

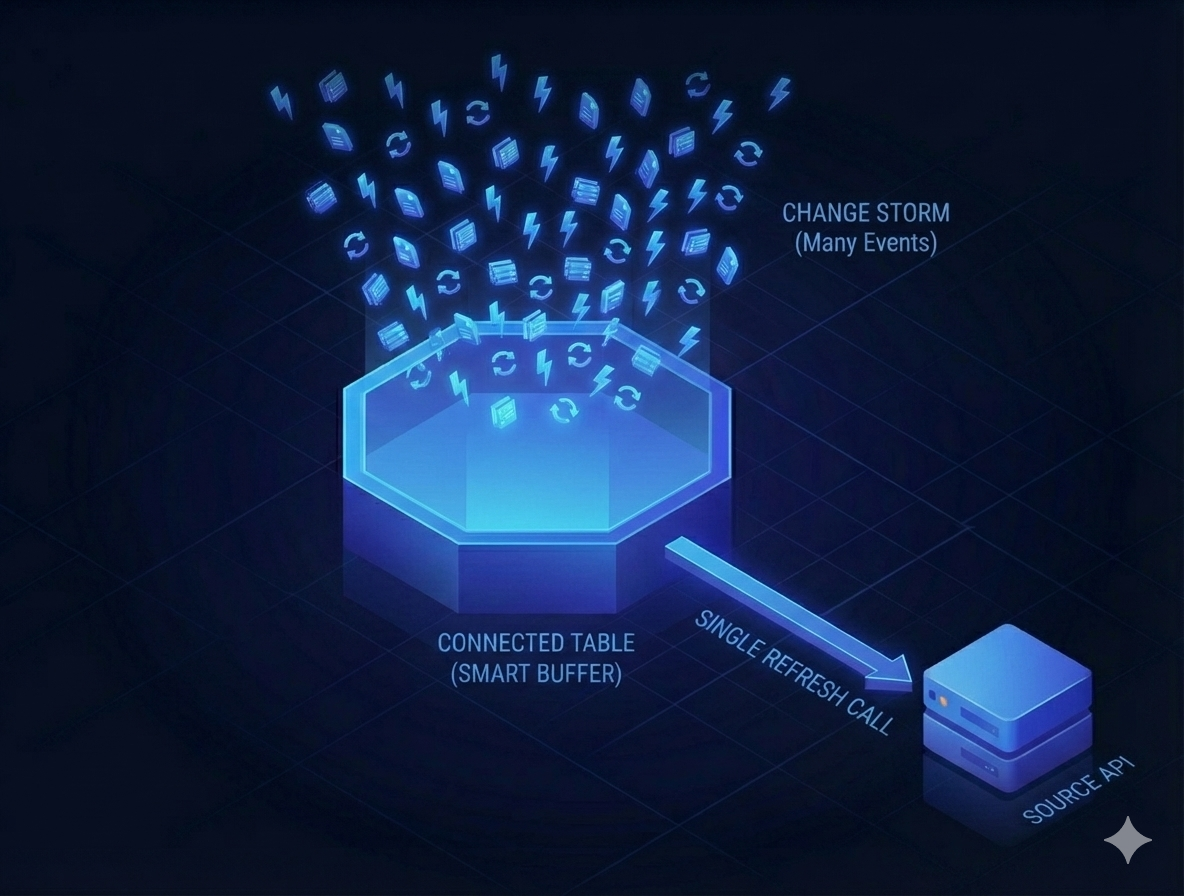

Compacting Change Storms

One of the most powerful features of Connected Tables is their ability to handle "change storms": rapid sequences of updates to the same object. In traditional systems, each change event might trigger a separate API call, quickly exhausting rate limits.

Connected Tables solve this by coalescing multiple change events into a single refresh operation. When 10-100 change events arrive for the same object, we simply mark it as dirty once. The next time that object is accessed, we make a single API call to fetch the latest state, regardless of how many intermediate changes occurred.

This approach is particularly valuable for objects that change frequently, such as tickets being updated by multiple team members, or records being modified by automated processes. Instead of making dozens of API calls to track every intermediate state, you make one call to get the final, current state when you actually need it.
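As a rough illustration of coalescing, the sketch below counts pending events per object and collapses them into one fetch on the next read. The `CoalescingCache` class and the simulated `upstream` dictionary are hypothetical names introduced for this example only.

```python
from typing import Callable, Dict


class CoalescingCache:
    """Collapses any number of change events into one refresh per read."""

    def __init__(self, fetch: Callable[[str], dict]) -> None:
        self._fetch = fetch                   # hypothetical upstream API call
        self._rows: Dict[str, dict] = {}
        self._pending: Dict[str, int] = {}    # object id -> events since refresh
        self.api_calls = 0

    def on_change_event(self, object_id: str) -> None:
        # Record that something changed; never fetch here.
        self._pending[object_id] = self._pending.get(object_id, 0) + 1

    def read(self, object_id: str) -> dict:
        if object_id not in self._rows or self._pending.get(object_id, 0) > 0:
            # One fetch covers every pending event for this object.
            self._rows[object_id] = self._fetch(object_id)
            self._pending.pop(object_id, None)
            self.api_calls += 1
        return self._rows[object_id]


# A storm of 100 updates to one ticket, followed by two reads.
upstream = {"ticket-7": {"version": 0}}
cache = CoalescingCache(fetch=lambda oid: dict(upstream[oid]))

for _ in range(100):
    upstream["ticket-7"]["version"] += 1
    cache.on_change_event("ticket-7")

latest = cache.read("ticket-7")   # single API call returns the final state
cache.read("ticket-7")            # already clean: no further call
```

The 100 intermediate states are never fetched. Only the state that exists at read time matters to the caller.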

Handling Change: Event‑First, Fetch‑Later

On a webhook, we record minimal event metadata and mark the row dirty. We do not retrieve the full object yet. Many webhook events do not carry the full payload, and an object that is changing rapidly can emit many individual change events. Fetching the full object after each one would multiply API calls, and each of those calls might be billed.

During query‑time access or periodic sweeps, we coalesce all pending events for that object into a single refresh. When a partner lacks webhooks, we fall back to adaptive polling with backoff and conditional requests, using cache validators such as ETags sent in If‑None‑Match headers. The point is to let events tell you what changed and let reads decide when to fetch.
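For the polling fallback, a conditional request lets the partner answer "nothing changed" without resending the object. The sketch below simulates that exchange locally instead of making real HTTP calls. `fake_upstream`, `ValidatedRow`, and `next_interval` are hypothetical helpers, the ETag is simplified to a bare content hash, and the 30-second base and one-hour cap are arbitrary assumptions.

```python
import hashlib
import json
from typing import Optional, Tuple


def fake_upstream(resource: dict, if_none_match: Optional[str]) -> Tuple[int, str, Optional[dict]]:
    """Stand-in for a partner API that supports ETag validation."""
    etag = hashlib.sha256(json.dumps(resource, sort_keys=True).encode()).hexdigest()
    if if_none_match == etag:
        return 304, etag, None           # Not Modified: no body is sent
    return 200, etag, dict(resource)     # full representation


class ValidatedRow:
    """Cached object plus the validator that came with it."""

    def __init__(self) -> None:
        self.etag: Optional[str] = None
        self.body: Optional[dict] = None
        self.full_downloads = 0

    def refresh(self, resource: dict) -> Optional[dict]:
        status, etag, body = fake_upstream(resource, self.etag)
        if status == 200:                # changed, or first fetch
            self.etag, self.body = etag, body
            self.full_downloads += 1
        return self.body                 # on 304 the cached body is reused


def next_interval(current: float, changed: bool,
                  base: float = 30.0, cap: float = 3600.0) -> float:
    """Adaptive backoff: reset after a change, otherwise double up to a cap."""
    return base if changed else min(current * 2, cap)


resource = {"name": "Acme"}
row = ValidatedRow()
row.refresh(resource)               # 200: first download
row.refresh(resource)               # 304: validator matched, body reused
resource["name"] = "Acme Inc"
latest = row.refresh(resource)      # 200: content changed upstream
```

Against a real API, the same logic would send the stored ETag in an `If-None-Match` request header and treat a `304 Not Modified` response as a cache hit.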

No Webhooks? Just‑in‑Time Polling

Some platforms don't offer webhooks. In those cases, we do not run a constant polling loop. Instead, after the initial import or first data fill, we poll on demand to refresh only when a read path actually needs the object. Practically, this means:

- A caller requests an object that is missing or beyond its staleness window

- We perform a targeted poll for that object or a small batch around it

- We upsert the result and serve it immediately, then mark it clean

This avoids needless background updates for data that may never be read, and it naturally coalesces many upstream changes into a single fetch when the object is finally accessed.
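A minimal sketch of this read path, assuming a per-table staleness window, might look like the following. The `JitPolledTable` class, the `poll` callable, and the 60-second window are illustrative assumptions. The clock is injected so the example runs deterministically.

```python
from typing import Callable, Dict, Tuple


class JitPolledTable:
    """Polls an object only when a read finds it missing or too old."""

    def __init__(self, poll: Callable[[str], dict],
                 max_age_seconds: float,
                 clock: Callable[[], float]) -> None:
        self._poll = poll                  # hypothetical targeted API fetch
        self._max_age = max_age_seconds    # assumed staleness window
        self._clock = clock
        self._rows: Dict[str, Tuple[float, dict]] = {}   # id -> (fetched_at, row)
        self.polls = 0

    def get(self, object_id: str) -> dict:
        now = self._clock()
        cached = self._rows.get(object_id)
        if cached is None or now - cached[0] > self._max_age:
            row = self._poll(object_id)            # targeted poll for this object
            self._rows[object_id] = (now, row)     # upsert and mark clean
            self.polls += 1
            return row
        return cached[1]                           # inside the window: no poll


# Fake clock for a deterministic demonstration.
now = [0.0]
table = JitPolledTable(
    poll=lambda oid: {"id": oid, "fetched_at": now[0]},
    max_age_seconds=60,
    clock=lambda: now[0],
)

table.get("c1")            # missing: polls once
now[0] = 30.0
table.get("c1")            # 30 s old, inside the window: served locally
now[0] = 61.0
fresh = table.get("c1")    # 61 s old, past the window: polls again
```

Outside of a demonstration, a monotonic clock such as `time.monotonic` would be a reasonable choice for the `clock` argument.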

How to Adopt This Pattern

Start by enabling Connected Tables in the workflow builder for the import and sync steps that touch your heaviest objects. Integry handles data import, webhook registration, object state tracking, and updates. Your workflow accesses data as usual, and the underlying Connected Table decides whether to serve the cached row or refresh it first.

Sequence Diagram: Read with Lazy Refresh

Closing Thought

Data APIs are the lifeblood of practical business agents and should be respected accordingly. Connected Tables improve user experience by cutting latency, and they reduce downstream load on API providers. That's how you deliver fast experiences for users, stay a good citizen to your partners, and cut down usage billing.

Interested in implementing this architecture for your API or agent integration? Please get in touch with us to learn more about how Connected Tables can transform your integration strategy.
